Zhu Haochuan and Chen Zhijia, Shanghai Law Society, Oriental Law

In essence, adjudication by generative artificial intelligence works as follows: algorithm researchers use a neural network to express the mapping between the elements of a case and its judgment as a function, then feed the elements of a new case into that function and take the output as the decision. There are three technical reasons why generative artificial intelligence cannot use reason in adjudication. First, the samples available for training are too few for artificial intelligence to generate a functional expression of reason. Second, the complexity of what reason means and the particularity of the fields in which it applies make case materials harder to digitize. Third, the entanglement of reason and jurisprudence in judicial adjudication makes it harder for artificial intelligence to interpret and apply reason. Sense and reason are irreducible, in language and in value, to the original materials and cannot be captured by artificial intelligence. Generative artificial intelligence has no self-awareness, so it cannot truly understand or use reason.

First, the question raised

Artificial intelligence is hard to define, and the term carries different meanings across technologies and scenarios. Generally speaking, artificial intelligence is the general name for the science and technology that imitates human intelligence. It aims to simulate, extend or expand human intelligence with automated machines or computers, and to give those machines the ability to carry out human thinking or mental work. Artificial intelligence has now developed to its 4.0 stage: it can not only help human beings process massive data, but also show a preliminary capacity to think and act like a human being. However, the development of the technology also brings many risks and challenges, and the social problems caused by artificial intelligence keep emerging, which has drawn wide attention in academic circles.

This paper focuses on the application of artificial intelligence in judicial adjudication. Many legal scholars are optimistic about AI in the judiciary. Some hold that "artificial intelligence can directly judge in place of judges", and that artificial intelligence adjudication is the legal expression of digital justice, offering both theoretical explanation and methodological support for justice. Even those who take a cautious attitude expect the technology of artificial intelligence adjudication to mature, and regard the question of whether it is appropriate as a matter of human value choice. Other scholars have voiced concern about the algorithmic risks of artificial intelligence adjudication and suggest that it be regulated and supervised by law.

With the rapid development of AI judicial research, the neglect of artificial intelligence ontology has become more and more obvious. A large number of researches are eager to occupy the highland of judicial application without knowing what artificial intelligence ontology is, and are trapped in the atmosphere of representational research. However, the criticism of the current situation of AI judicial research is not intended to deny the value of artificial intelligence in judicial application, but to expect legal scholars to turn from the "reality" of artificial intelligence to the "shape" of artificial intelligence in judicial application. It is worth noting that judicial activities provide rich materials for the development of AI justice. The performance of artificial intelligence in judicial activities will help legal scholars to clarify the ontology of artificial intelligence and further study the judicial application of artificial intelligence.

Building on the above, this paper poses and tries to answer its core question: can generative artificial intelligence enter the field of judicial application? The artificial intelligence discussed here is limited to generative artificial intelligence, for two reasons. First, as to what it is: generative artificial intelligence is a technology that can learn independently and generate images, sounds and other media realistic enough to pass for the real thing, and the recently much-discussed ChatGPT is a representative example. This technology falls within the scope of strong artificial intelligence and differs from artificial intelligence under "mechanical automation", such as voice input and online retrieval of judgment documents. For convenience, this paper generally does not distinguish between "generative artificial intelligence" and "artificial intelligence" in its wording. Second, as to why the discussion matters: weak artificial intelligence (artificial intelligence under "mechanical automation") is not enough to challenge judicial practice, where it is usually positioned as an auxiliary tool for trial work. Generative artificial intelligence, with its "automatic" communicative and creative abilities, can not only imitate human activity to create text, sound and images, but also improve its generative ability through communication with people. This challenges the human understanding of "consciousness", and in the judicial field there are calls for it to judge in place of judges. The discussion of this issue therefore has practical significance.

Second, observation and analysis of artificial intelligence adjudication

To meet the needs of judicial practice, some foreign countries have developed artificial intelligence legal expert systems, which simulate the legal reasoning of legal professionals on the basis of existing rules and a large number of cases in order to find solutions to new legal problems. Artificial intelligence adjudication is the activity in which a judge inputs the case materials into a legal expert system, adopts or takes into account the result it produces, and makes a judicial decision.

(1) Practical exploration of artificial intelligence adjudication

So far, artificial intelligence adjudication has mostly been realized by comparing a pending case against a large body of decided cases. The system identifies highly similar cases within the case group, carries the reasoning of those precedents and the legal provisions they applied over to the pending case, and predicts the outcome.

Artificial intelligence is reported to predict outcomes more accurately than legal professionals. CaseCruncher Alpha, an artificial intelligence "lawyer", competed with 100 elite lawyers in London, England, on "predicting whether the Financial Ombudsman would allow a claim, given the basic facts of hundreds of PPI (payment protection insurance) mis-selling cases". A total of 775 predictions were submitted, and the results showed that the prediction accuracy of CaseCruncher Alpha was 86.6%, while that of the lawyers was only 66.3%. Researchers at the Illinois Institute of Technology and South Texas College of Law built a mathematical model using the random forest algorithm to predict the decisions of the Supreme Court of the United States from 1816 to 2015. Its prediction accuracy was 70.2% at the case outcome level and 71.9% at the justice vote level. Katz, an author of the prediction model, said that the model differs from others because it can be applied out of sample to the whole past and future of the Court’s decision-making. Drawing on the computing power of artificial intelligence, DoNotPay has created a chat robot that provides legal services. Through dialogue, the robot helps users deal with the laws and agreements they meet in everyday situations and safeguard their rights. In 2020, DoNotPay received the Louis M. Brown Award from the American Bar Association in recognition of its "commitment to providing legal services to those with low incomes".

The above cases show that artificial intelligence commands vast stores of judgments and abundant computing power, and may have advantages over human beings in the field of judicial adjudication. Artificial intelligence is already at work in the judicial decisions of some foreign countries. In the United States, courts in several states have used three major artificial intelligence risk assessment systems, COMPAS, PSA and LSI-R, to predict the probability that a detainee will reoffend and to help judges decide whether to grant probation. Estonia uses a judicial artificial intelligence system to handle small claims disputes worth less than 7,000 euros. This spares judges and lawyers complicated and trivial matters, and has also helped Estonia rank second in the EU for the speed of judicial decisions.

While artificial intelligence is widely used in adjudication, scholars have raised concerns about how it operates in practice. The first is due process. The algorithm behind an artificial intelligence adjudication system is a trade secret, and the company responsible for it refuses to disclose its content or the factors considered in its design. Nor is there any unified standard for designing such algorithms. None of this is known to the defendant, yet the "guilty verdict" generated by artificial intelligence often leaves the defendant in the awkward position of self-incrimination. The second is algorithmic bias. Even if the model strives to predict accurately for groups of defendants with similar characteristics, too much remains uncertain for any individual, and individual characteristics are ignored by the algorithm.

(2) Theoretical analysis of artificial intelligence adjudication

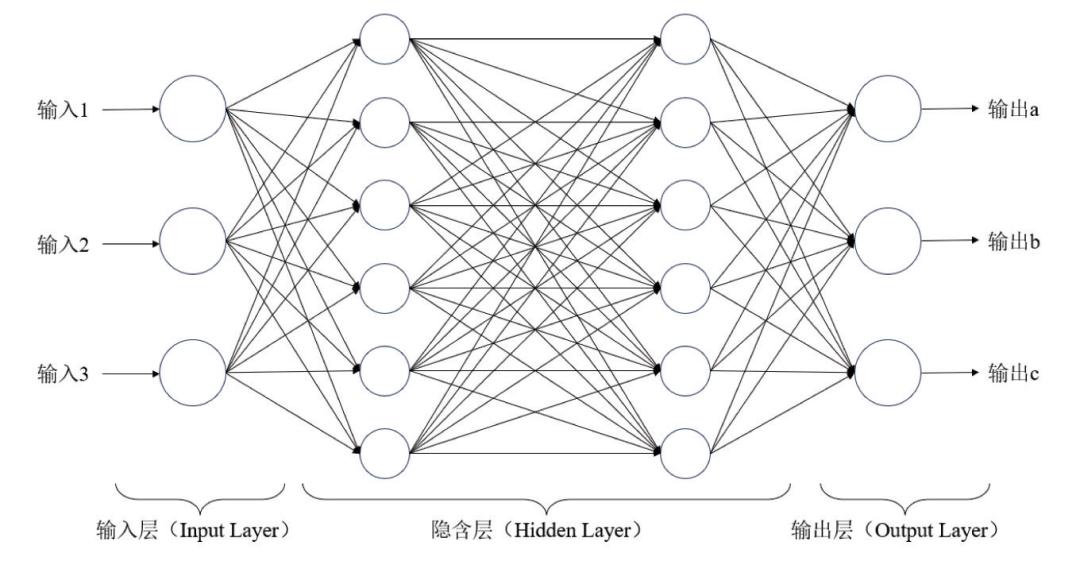

Any investigation of artificial intelligence must analyze the technical theory of artificial intelligence. At present, the construction of artificial intelligence in the scientific community mainly adopts the viewpoint of neural network school, which believes that human’s highly complex thinking ability, external learning and adaptability are all bred by a large number of nerve cells. Realizing artificial intelligence means training neural network through big data, simulating human learning process and giving machine wisdom.

The basic unit of a neural network is the neuron, and the basic model of the neuron is the M-P (McCulloch-Pitts) model, which contains a discriminant function. As a simple example, input into the neuron model the signals "Zhang San illegally deprived another person of life", "he did so intentionally" and "whoever commits the crime of intentional homicide should be sentenced to death", and the model will conclude through its discriminant function that "Zhang San should be sentenced to death". This reasoning is in essence deductive. Legal formalism provides a solid theoretical basis for it and sets out the premise on which artificial intelligence adjudication could apply. Specifically, within a closed and self-sufficient legal system, the actor’s conduct in the case is converted into signals and fed into the model, and the model produces a judgment by functional discrimination according to legal norms. In fact, however, this is only an idealized picture of artificial intelligence adjudication, and it differs in essence from what artificial intelligence actually does. For example, Menon et al. used a logistic regression algorithm with Bag of Words features and the RSLPS stemmer to predict judicial decisions with an accuracy of 78.02%. Put simply, such a model expresses the mapping between the elements of a case and its judgment as a function y=f(x), then feeds the elements xi of a new case into the function and takes the computed result as the decision. This means that new cases are decided according to the generated function rather than legal norms, which runs against the way judges decide cases in civil law countries. It also faces the legal question of whether similar cases must receive similar judgments.
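The idealized neuron in the example above can be sketched as a simple threshold unit. This is only an illustration: the signal names, the equal weights and the threshold of 3 are assumptions chosen so that the unit behaves like a logical AND over the three premises, and they do not come from any real system.

```python
def mp_neuron(inputs, weights, threshold):
    """Threshold unit in the McCulloch-Pitts style:
    output 1 when the weighted sum of inputs reaches the threshold, else 0."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


# Hypothetical binary signals for the syllogism in the text (1 = premise holds).
SIGNAL_NAMES = ("deprived_another_of_life", "acted_intentionally", "norm_applies")
WEIGHTS = (1, 1, 1)
THRESHOLD = 3  # all three premises must hold, so the unit acts as a logical AND


def conclusion(signals):
    """Return 1 if the model 'concludes' that the legal consequence follows."""
    values = [signals[name] for name in SIGNAL_NAMES]
    return mp_neuron(values, WEIGHTS, THRESHOLD)


all_premises = {"deprived_another_of_life": 1, "acted_intentionally": 1, "norm_applies": 1}
no_intent = {"deprived_another_of_life": 1, "acted_intentionally": 0, "norm_applies": 1}

print(conclusion(all_premises), conclusion(no_intent))
```

The unit fires only when every premise is present, which mirrors deductive reasoning. A model fitted to past judgments, by contrast, learns its weights from data rather than from the legal norm.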

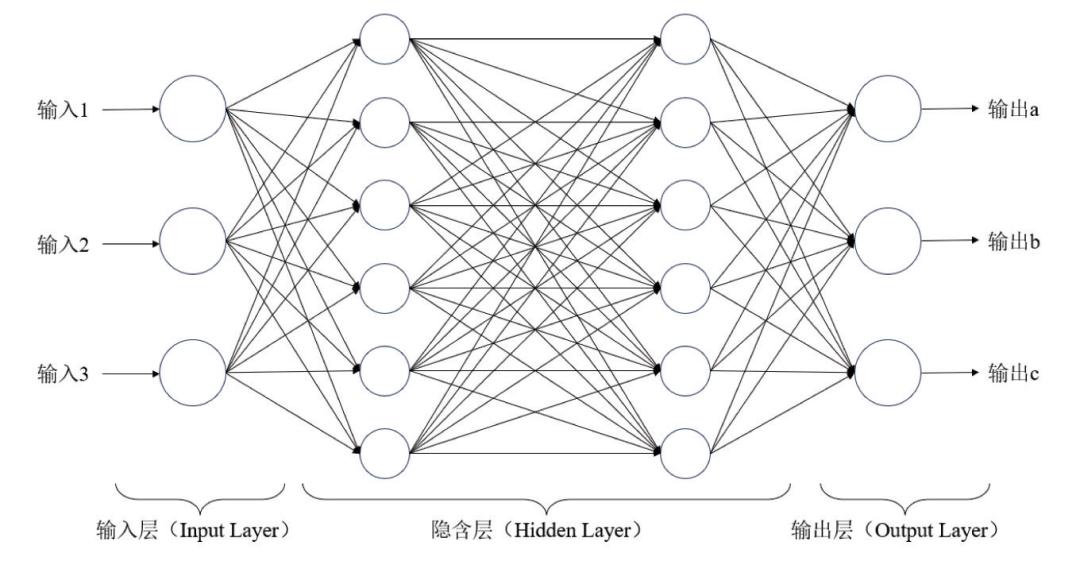

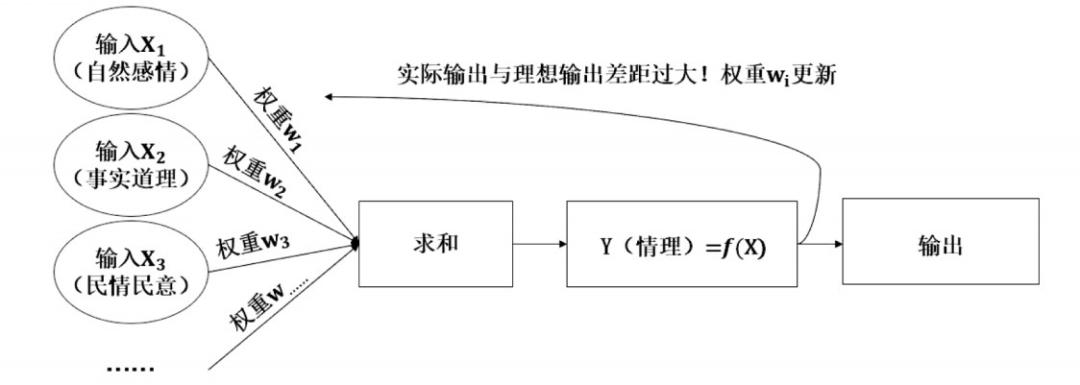

However, a single neuron model cannot perform the exclusive-or (XOR) operation, and it cannot cope with the complicated problems of judicial practice. To further improve prediction accuracy, a judicial judgment model must be built from a neural network of two or more layers. A simple (feedforward) neural network model is shown in the following figure (Figure 1).

Fig. 1 Artificial intelligence neural network model diagram

The model has three basic structures: an input layer, hidden layers and an output layer, and information propagates through it in one direction. The number of hidden layers can be extended without limit, and adjacent layers are connected through their neurons. How intelligent the system is depends on the number of neurons and the number of hidden layers. In actual artificial intelligence adjudication, the system takes in the age, family situation, beliefs, values, specific conduct and so on of the person being assessed, weighs the relevant factors together, and computes a result through the discriminant function. Because scholars adopt different natural language processing techniques and models, their accuracies differ accordingly. For example, Ahmad predicted the judgments of judicial cases with a hybrid deep neural network (CNN+BiLSTM), and the accuracy of the hybrid model reached 91.52%. Kowsrihawat used an end-to-end deep learning neural network to predict judicial decisions, with an accuracy of 74.38%.
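A classic illustration of why one neuron is not enough is the exclusive-or (XOR) function, which no single threshold unit can compute but which a network with one hidden layer can. The sketch below wires up such a network by hand. The weights and biases are illustrative assumptions rather than trained values, and the network is far smaller than anything used for judgment prediction.

```python
def step(z):
    """Threshold activation: 1 when z is non-negative, else 0."""
    return 1 if z >= 0 else 0


def neuron(inputs, weights, bias):
    """One unit: weighted sum plus bias, passed through the step function."""
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)


def xor_network(x1, x2):
    """Input layer -> hidden layer (two units) -> output layer (one unit)."""
    # Hidden layer: hand-picked weights (assumed for illustration).
    h_or = neuron([x1, x2], [1, 1], -1)    # fires if at least one input is 1
    h_and = neuron([x1, x2], [1, 1], -2)   # fires only if both inputs are 1
    # Output layer: "OR but not AND", which is exactly XOR.
    return neuron([h_or, h_and], [1, -2], -1)


INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
print([xor_network(a, b) for a, b in INPUTS])
```

Information flows in one direction only, from inputs through the hidden units to the output, as in the feedforward structure described above.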

Human use of information is complicated: a person may use the same information repeatedly in making a judgment, or reflect on the judging process in light of its result. Error back propagation in a neural network simulates this feedback mechanism of the human brain. If the output of the artificial intelligence does not agree well with the known result of an input case, the system triggers its "back propagation algorithm" to adjust the connection weights between its computing units, so that its next output differs from the previous one. The system then compares again. If the agreement is still poor, it runs back propagation again, and so on until the actual output and the ideal output are consistent. It is worth mentioning that algorithmic discrimination arises in this repeated "feedback". More importantly, the feedback chain makes the structure of the neural network ever more complex and opaque. For a network with dozens or even hundreds of layers and tens of thousands of nodes, combined with the self-learning mode of artificial intelligence, even its designers can hardly tell exactly how the parameters of each node in each layer were formed and what they represent. Compared with designers who withhold an algorithm on grounds of trade secrecy, the "algorithm black box" produced by deep learning is an even sharper affront to the human instinct to expect justice.
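The compare-and-adjust loop described above can be sketched with a very small network trained by error back propagation. Everything specific here is an assumption made for illustration: the toy data set (two binary case elements mapped to an outcome), the 2-2-1 layout, the starting weights, the learning rate and the number of passes.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Toy data (hypothetical): two binary case elements -> outcome label.
DATA = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 1.0)]


def forward(params, x):
    """Return hidden activations and the network output for one input."""
    w_hidden, b_hidden, w_out, b_out = params
    hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x)) + b)
              for ws, b in zip(w_hidden, b_hidden)]
    output = sigmoid(sum(w * h for w, h in zip(w_out, hidden)) + b_out)
    return hidden, output


def mean_squared_error(params, data):
    return sum((forward(params, x)[1] - y) ** 2 for x, y in data) / len(data)


def train(data, epochs=2000, lr=0.5):
    # Fixed, arbitrary starting weights so that the run is repeatable.
    w_hidden = [[0.5, -0.4], [-0.3, 0.2]]
    b_hidden = [0.0, 0.0]
    w_out = [0.3, -0.1]
    b_out = 0.0
    losses = []
    for _ in range(epochs):
        losses.append(mean_squared_error((w_hidden, b_hidden, w_out, b_out), data))
        for x, y in data:
            hidden, output = forward((w_hidden, b_hidden, w_out, b_out), x)
            # Error signal at the output unit (squared error, sigmoid derivative).
            delta_out = (output - y) * output * (1.0 - output)
            # Propagate the error back to each hidden unit before changing weights.
            delta_hidden = [delta_out * w_out[j] * hidden[j] * (1.0 - hidden[j])
                            for j in range(2)]
            # Adjust the connection weights against the error gradient.
            for j in range(2):
                w_out[j] -= lr * delta_out * hidden[j]
                for i in range(2):
                    w_hidden[j][i] -= lr * delta_hidden[j] * x[i]
                b_hidden[j] -= lr * delta_hidden[j]
            b_out -= lr * delta_out
    params = (w_hidden, b_hidden, w_out, b_out)
    losses.append(mean_squared_error(params, data))
    return params, losses


params, losses = train(DATA)
print(f"loss before training: {losses[0]:.4f}, after: {losses[-1]:.4f}")
```

Even in this tiny case the final weights are just numbers that happen to reduce the error. Nothing in them states why a given case element matters, which is the opacity the text describes at much larger scale.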

Third, how does artificial intelligence adjudication face "reason"

Legal scholars committed to AI judicial research are optimistic about the deep learning ability of artificial intelligence, but overlook whether artificial intelligence adjudication can itself "apply justice". Even if artificial intelligence can accurately predict the results of judicial decisions and shows greater computing power than the human brain, this does not prove that it can replace judges in making judicial decisions. The Supreme People’s Court requires that the interpretation and reasoning of judgment documents "clarify the facts, explain the jurisprudence, explain the reason and attend to the quality of the writing". Taking "sense" as an example, the author will discuss how artificial intelligence adjudication interprets "sense" and whether it can "explain sense".

(1) How does artificial intelligence interpret "sense" in adjudication

In the theory of law, the concept of "sense" has rich connotations, which refers to the universal value criterion obtained by integrating emotional experience into the interaction process of social members. From the origin, reason originates from the instinctive feelings of animals, and is seen above feelings; From the perspective of substantive origin, reason exists in the general social order and is expected to be discovered by judicial workers in the case. From the perspective of judicial application, reason can be universally proved and used as the basis of quasi-judgment. Therefore, "sense" has both emotional attributes and factual and normative features.

In judicial practice, the judge will either turn it into natural feelings such as filial piety and love, or turn it into the truth of things, or turn it into the public opinion. A human judge must face a large number of cases before he can have a deeper understanding of "sense". Artificial intelligence has the ability to interpret "sense" based on the rapidity and repeatability of learning.

The core of understanding reason lies in the processing of natural language text by artificial intelligence. The essential feature of natural language is the internal computing mechanism, which produces unbounded structured phrases and sentence arrays. This shows that the expression of natural language is not chaotic, which provides the possibility for artificial intelligence to understand language. Because the real natural language is extremely complex, it cannot be directly processed by artificial intelligence. Scholars try to formalize the natural language and establish a "formal model" of the language, so that it can be expressed strictly and regularly in a certain mathematical form, and these texts are processed and analyzed by artificial intelligence.

For example, if the author inputs a passage from a judgment document, "but his earlier and later statements are inconsistent in several places, and his explanation is unreasonable", artificial intelligence will first "segment" the text it receives into words. When it finds that, in its corpus, two adjacent characters very probably combine to form the word "statement", it treats "statement" as a single word, and the rest of the text is segmented in the same way. Second, artificial intelligence performs part-of-speech tagging (labeling nouns, verbs, adjectives, etc.) and syntactic analysis (identifying the subject, predicate, object, etc.) on the segmented words. Third, artificial intelligence attempts a "semantic analysis" of the text, which is carried out with the help of one or more models.
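The first two steps can be sketched on a toy input. Note the substitutions: the text describes probability-based segmentation, whereas this sketch uses the simpler dictionary-based forward maximum matching as a stand-in, and it works on an unspaced English string rather than Chinese. The lexicon and the part-of-speech table are hypothetical.

```python
# Hypothetical mini-lexicon with coarse part-of-speech tags.
LEXICON = {
    "the": "DET",
    "state": "NOUN",
    "statement": "NOUN",
    "is": "VERB",
    "reason": "NOUN",
    "unreasonable": "ADJ",
}


def segment(text, lexicon, max_len=12):
    """Forward maximum matching: at each position take the longest lexicon entry.
    Characters that match nothing are emitted as single-character tokens."""
    tokens = []
    i = 0
    while i < len(text):
        for size in range(min(max_len, len(text) - i), 0, -1):
            candidate = text[i:i + size]
            if candidate in lexicon or size == 1:
                tokens.append(candidate)
                i += size
                break
    return tokens


def tag(tokens, lexicon):
    """Look up a tag for each token; unknown tokens are tagged 'UNK'."""
    return [(token, lexicon.get(token, "UNK")) for token in tokens]


tokens = segment("thestatementisunreasonable", LEXICON)
print(tag(tokens, LEXICON))
```

The segmenter prefers "statement" over the shorter "state" because it always takes the longest match. A statistical segmenter would reach the same decision by comparing the probabilities of the candidate words in its corpus.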

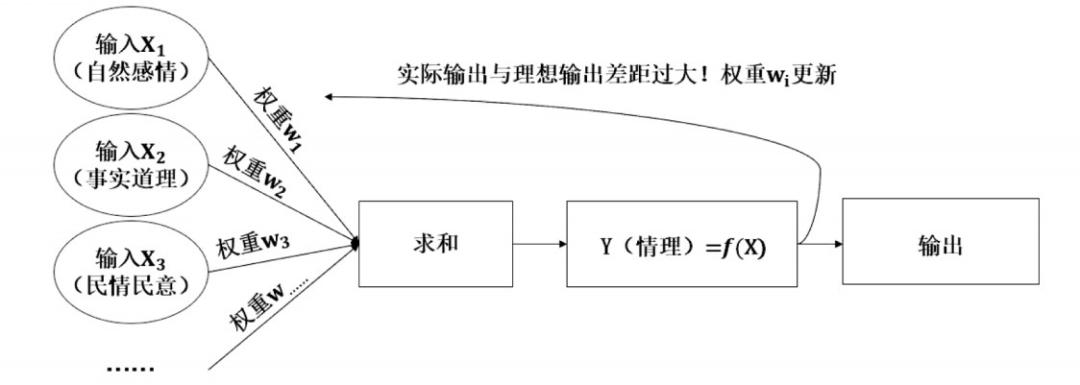

Artificial intelligence can identify what it is processing, but it cannot really "know" it as humans do. In fact, its interpretation of texts about sense depends on how the algorithm developers interpret sense. Although people understand sense differently, it can be roughly broken down into natural emotions, the truth of things, human feelings, public opinion and so on. In essence, artificial intelligence "understands" sense by assigning weights to these elements (contribution distribution), feeding the error in the training results back to the neurons at each level through error back propagation, giving them revised weights, and repeating the process (as shown in Figure 2). However, artificial intelligence cannot judge what sense is in its initial state. Sense is also normative, which requires that the sense found in the training materials be lawful, and interpretations of sense by people who are not judicial professionals will erode that normative character. Moreover, some judges apply legal logic and legal norms rigidly, so that their decisions depart from reason. If the input materials are not screened, artificial intelligence will treat everything it recognizes as true and stray from a correct understanding of reason. Finally, R&D personnel can observe the training process and set or add new elements and weights.

Fig. 2 Schematic diagram of artificial intelligence interpreting "sense"

It is worth noting that the relationship between reason and its elements is not limited to simple (x, y) point pairs, but takes the form of generalized (x, y) point pairs composed of vectors and matrices. On the one hand, human emotions are rich: they include not only family affection, love and friendship, but also give rise to different emotional states such as joy, anger and sorrow. For example, a relationship of "love" may lead not only to the ideal of "mutual help" but also, easily, to wrongful acts when love turns to hate. On the other hand, social relations are quite complicated, and two or more considerations of reason in the same case may pull the verdict in conflicting directions. For example, in the case of a driver who injured a passenger during a gratuitous ride, the court’s application of reason took into account both the moral ideal of "encouraging people to help others" and the requirement of fairness that "the injurer make good the loss". Artificial intelligence must therefore use multi-layer composite functions and interpret reason with a neural network model.
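The "multi-layer composite function" mentioned above can be written out in one line. The notation is an assumption introduced here for illustration: x is the vector of case elements, y the output vector, W_k and b_k the weight matrix and bias vector of layer k, and σ_k that layer's activation function, for a network with L layers.

```latex
\mathbf{y} = f(\mathbf{x})
  = \sigma_L\!\Bigl( W_L \,\sigma_{L-1}\bigl( \cdots \sigma_1( W_1 \mathbf{x} + \mathbf{b}_1 ) \cdots \bigr) + \mathbf{b}_L \Bigr)
```

With a single layer and scalar x and y, this reduces to the ordinary (x, y) point pair fitted by y=f(x). With vectors and matrices, conflicting considerations can enter as separate components of x and be weighed against each other inside the same function.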

(2) Can artificial intelligence use "sense" in adjudication?

In judicial trial activities, reason is mainly applied to evaluate facts or evidence. It can be inferred that reason often plays an auxiliary role in adjudication and is not the direct basis of the judge’s decision. Therefore, accurately predicting the outcome of a case does not show that artificial intelligence has applied reason or can explain it. The author believes that there is a logical gap between building an artificial intelligence model to understand reason and applying reason in judicial activities. In light of the process by which artificial intelligence interprets reason, the author sees several technical reasons why artificial intelligence cannot use reason:

First, the samples available for training are too few to help artificial intelligence deeply understand and use reason. On the one hand, simulating judges’ use of reason requires abundant case materials. For example, when Al-Kofahi built an artificial intelligence system that extracts and processes court opinions and learns by itself, the database held about 7 million cases. When Spaeth et al. predicted the verdicts of the Supreme Court, their data were based on all the judgments of the justices. However, when the author ran a full-text search for the keyword "sense" across online judgment documents, it appeared only 145,799 times from 2012 to 2022, which is far from enough training data. In addition, some judges decide according to reason without using the word, and artificial intelligence cannot tell whether the judge considered it. For example, the criminal judgment in the "Yu Deshui case" was praised by the media as "the most temperate judgment". The judge explained, with a fine grasp of reason, why the defendant Yu Deshui was given a lighter punishment, yet the word "reason" does not appear in the judgment.

On the other hand, judicial decisions that fail to give reasons are common. Some judgments lack the support of law and reason: the law is applied arbitrarily, the argument ignores common sense, or the legal explanation is crude and perfunctory. Some judges follow the principle of "rather simple than complicated", fearing that the more they say, the more they will get wrong and the more others will have to seize on. Some have no incentive to give reasons because of institutional differences. There are even judges who knowingly bend the law and hand down wrong judgments in defiance of reason. The existence of such judgment documents harms the interpretation and application of reason by artificial intelligence.

Can the data generated by artificial intelligence then be used to train artificial intelligence, so as to solve the shortage of adjudication samples? Shumailov et al. found that this kind of self-bootstrapping training suffers from the "curse of recursion": the model "forgets" the original data and eventually collapses. This also means that only data created by human beings has real value for training artificial intelligence.
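The effect of training on one's own output can be illustrated with a deliberately simple stand-in, which is not the experimental setup of Shumailov et al. Here the "model" is just a normal distribution fitted to a small sample, and each generation is fitted only to samples drawn from the previous generation's fit. The sample size, number of generations and seed are arbitrary assumptions.

```python
import random
import statistics


def simulate_collapse(generations=200, sample_size=10, seed=0):
    """Refit a normal distribution to its own samples, generation after generation.
    Returns the fitted standard deviation at each generation."""
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # generation 0 stands for the original "human" data
    history = [std]
    for _ in range(generations):
        # The next model sees only data generated by the current model.
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        mean = statistics.fmean(samples)
        std = statistics.pstdev(samples)
        history.append(std)
    return history


history = simulate_collapse()
print(f"spread at generation 0: {history[0]:.3f}")
print(f"spread at generation {len(history) - 1}: {history[-1]:.3e}")
```

Each refit loses a little of the spread of the original data, and the losses compound, so later generations describe an ever narrower slice of what the first one saw.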

Second, the complexity of what reason means and the particularity of the fields in which it applies make case materials harder to digitize. In some cases, several interwoven emotions complicate the matter and are hard to express in numbers, yet artificial intelligence can only interpret case materials in mathematical form. According to the casebook Zheyu Guijian, two brothers each claimed that a child was his own son, and Huang Ba, the county magistrate, had the two sisters-in-law "compete for it". The child’s biological mother, afraid of hurting the child, did not dare to pull hard. Deciding such a case requires considering not only the feeling that "the mother fears injuring her child and dares not pull hard", but also weighing it against the feeling that "the mother, ordered to compete, must pull for fear of losing her child". Several considerations of reason may also coexist in one case, as in the gratuitous ride case mentioned above, in which a driver injured a passenger.

Third, the entanglement of reason and jurisprudence in judicial adjudication makes it harder for artificial intelligence to understand and apply reason. Generally speaking, the relationship between the two is complex. On the one hand, reason and jurisprudence are two sides of one whole and complement each other: legal provisions should be drawn from the reason of everyday social life, and the reason of everyday life is in turn fixed by law. On the other hand, the purpose of reason is to adjust or restore the original social relations, while jurisprudence is characterized by formal rationality and settles disputes through the logical application of legal norms. The two are closely related but serve different purposes. Where they conflict, balancing reason against jurisprudence, or using reason to choose among legal arguments, places high professional demands on judges. For artificial intelligence, which angle to adopt in recognizing and discussing a case is merely a matter of probability and carries no substantive meaning. Perhaps the algorithm designer has preset a certain angle, or the artificial intelligence itself takes the outcome of a case as the yardstick for measuring the "value" of a text, but this easily leads to mechanical justice. Not only can jurisprudence and reason not be integrated in the judgment, but justice in the individual case cannot be achieved either.

One possible solution is to use a set of argumentation schemes to create an argument diagram for each input case, in the expectation that artificial intelligence can then reason legally from the real cases in the database. The shortcomings of this method are obvious, however: either all possibilities must be exhausted when creating the argument diagrams, which entails an enormous amount of manual work, or the training base is too small for the evaluation of prediction performance to be statistically rigorous. A stronger objection is that a court can find supporting reasons without precedent, or even outside the legal provisions, whereas artificial intelligence can only find supporting reasons in the provisions and cases entered into it. A judge’s interpretation of legal norms is not based on the text alone, but also draws on interpretive methods that go beyond the provisions themselves, such as purposive and historical interpretation, in order to seek the ideals of fairness and justice embodied in legal norms.

Fourth, philosophical reflection on the inability to use "sense"

The inability of artificial intelligence to use "reason" rests on a deeper philosophical objection: artificial intelligence has no self-awareness. Philosophy cannot say how an algorithm should be designed, but questions such as how to define "intelligence" and how to devise experiments that refute theoretical assumptions, together with the dependence of artificial intelligence research on philosophy’s understanding of the mind, make a philosophical discussion of artificial intelligence possible.

(1) A fundamental refutation of the possibility of using reason

Although the author has shown above that artificial intelligence cannot use reason, that demonstration concerns only the technical shortcomings of artificial intelligence in applying reason. Reflecting on "sense" from a philosophical perspective refutes the possibility of artificial intelligence using sense at a fundamental level. In China, "sense" is often understood through an "emotion + reason" model. On the whole, the discourse of "sense" incorporates the moral order while emphasizing the reality and particularity of things, and being "reasonable" means combining these two factors.

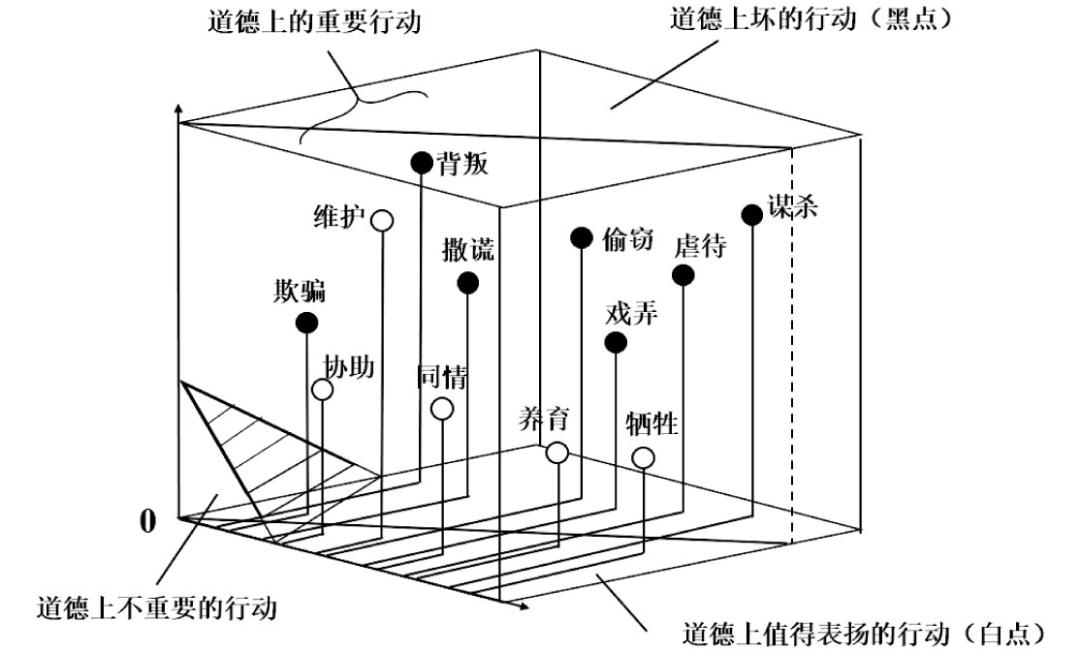

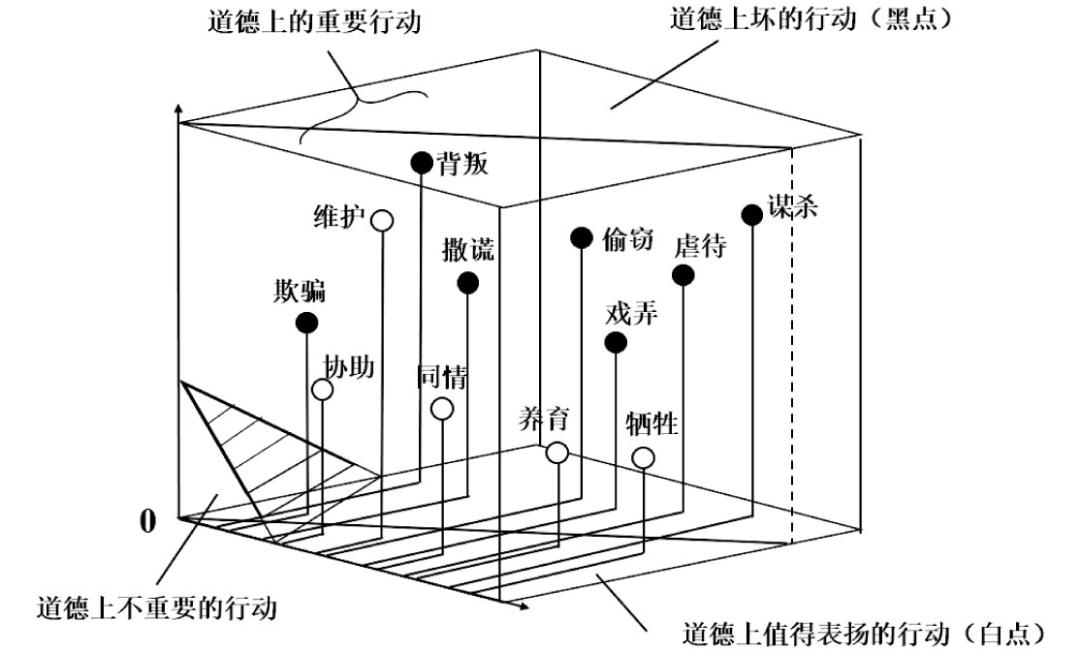

For artificial intelligence, if artificial intelligence embodies the usage of "sense" in the output content, it is not superficially different from human beings in "understanding" the meaning of sense. Churchland believes that the human brain will not constitute a theory or legal system of distributive justice, but at most it can only constitute a theory about how we produce, embody and apply these valuable cognitive achievements. In this regard, he conceived a neural network model that leads to cognition from the field of meta-ethics.

There are three steps to train the model: first, all the underlying descriptions of human behavior are fuzzified and input into the neural network, and the weights of nodes are adjusted. This process simulates that adults constantly understand their social and moral environment through perceptual skills; Second, divide the abstract space on the neural layer and divide it into a set of hierarchical categories in some proprietary neuron layers, such as "morally important" and "morally unimportant" behaviors; Thirdly, determine the position of each stimulated behavior point in the space. These structured spaces constitute acquired knowledge of the social space structure and how to effectively control the social space. (See Figure 3)

Fig. 3 Vector space of moral identification envisaged by Churchland

Moral cognition in the Churchland model resembles reason, but philosophical difficulties arise if we try to fit reason into the model. Artificial intelligence tries to generalize scattered case data into a higher-level function y=f(x), but sense is often found outside the material. The wording of this quotation does not obviously bring a higher level of value evaluation, but it is also combined with historical facts and belongs to the special expression of "one thing, one word". "One thing, one word" offers no numerical standard by which its content could be fuzzified, which also makes reason impossible to digitize.

Sense also takes the complex form of "metaphor", which requires scholars to understand it in a higher context detached from particular events. Artificial intelligence, however, is constructed by human beings and cannot step outside the knowledge it has been given or the content of its program to understand reason in depth. In the Chinese context, the practice of appraising persons through historical analogy conflicts methodologically with the inductive judgment of artificial intelligence. This is enough to show that reason is irreducible in both language and value in the original materials and cannot be taken in by artificial intelligence.

(2) Lack of "self-awareness" in artificial intelligence

Searle conceived the "Chinese room" to refute the claim that artificial intelligence has "self-awareness". Even if the person in the room does not understand Chinese, he can still supply answers to people outside the room by consulting a dictionary and following rules. Searle pointed out that the computations of artificial intelligence are merely carried out according to a designed program, and that the machine does not understand what it is processing. The author agrees with Searle, and must also acknowledge that a well-performing computational model owes its success mainly to human experts, who fuzzify the data and confirm, through one or more groups of (x, y) point pairs, the specific kind of mapping between input information and target information.

Confirming the mapping requires a great deal of data checking. Experienced designers have a more accurate intuition for which mathematical formula describes the (x, y) point pairs. The designer picks a formula and then uses real data to correct its parameters. In essence, this process reaches a suitable answer through trial and error. Xu Yingjin described it as a process of "a foreign apprentice learning kung fu from a Chinese master": artificial intelligence learns by using its powerful computing power to "guess" until it hits on an answer that satisfies the conditions.
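The trial-and-error fitting described above can be made concrete with a minimal sketch. It assumes that the designer has already chosen the formula y = a·x + b and that the point pairs come from y = 2x + 1; the data, the function names and the random-guessing strategy are illustrative assumptions, not a description of how any particular system is trained.

```python
import random

# Hypothetical (x, y) point pairs, generated here from y = 2x + 1.
POINTS = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

def squared_error(a, b, points):
    """Total squared gap between the formula's output and the real data."""
    return sum((a * x + b - y) ** 2 for x, y in points)

def fit_by_guessing(points, trials=20000, step=0.1, seed=0):
    """Guess small random changes to (a, b); keep a guess only if it
    reduces the error, otherwise discard it and guess again."""
    rng = random.Random(seed)
    a, b = 0.0, 0.0
    best = squared_error(a, b, points)
    for _ in range(trials):
        cand_a = a + rng.uniform(-step, step)
        cand_b = b + rng.uniform(-step, step)
        err = squared_error(cand_a, cand_b, points)
        if err < best:
            a, b, best = cand_a, cand_b, err
    return a, b, best

a, b, err = fit_by_guessing(POINTS)
print(round(a, 2), round(b, 2))
```

The loop never represents why the data follow this line. It only keeps whichever guess happens to reduce the error, which is the sense in which the learning is a matter of computing power rather than understanding.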

It can be seen that the learning process of artificial intelligence is very clumsy, and that humans overlook this clumsiness only because of the computer's powerful data-processing ability. The richer the data or the more complex the model, the more computing power is required. That the results of such clumsy learning can exceed the accuracy of human experts rests precisely on the high-quality samples supplied by a large number of human experts. Artificial intelligence learns that something is so without knowing why it is so; it works only on the surface processing of information. AI painting, which has recently become popular, is a case in point: in essence it is still the result of program computation. Artificial intelligence itself has no material for painting and does not know what kind of picture its own program will produce, let alone where the brush should fall.

This leads to another question about artificial intelligence: does it have "self-awareness"? Farina holds that self-awareness in artificial intelligence presupposes that artificial intelligence has a minimal "self". However, the arguments offered in academic circles (especially in science and engineering) often drift away from this proposition and treat the mechanism by which artificial intelligence selects behavior according to its program as its minimal self.

There are many such "self-aware subject models" in academic circles. For example, the CPS (Cyber-Physical Systems) model constructed by Dutt et al. can perceive its environment, analyze it, make decisions, and achieve the desired goals within the constraints of its program. Selitskiy built an ANN model with a meta-learning supervisor that adjusts itself more accurately on misclassified cases of face recognition and facial expression recognition.

The author considers these models sound and important in practice. Two criticisms nevertheless remain. First, the learning mechanism of artificial intelligence is supplied entirely by the designer, whereas the author finds it hard to imagine that human learning in society, even in an ideal society, is likewise governed by a learning mechanism set by a "creator". This in fact undermines the "He Me" perspective positioning of artificial intelligence. Second, on the surface artificial intelligence acts in response to changes in its environment; in essence, once certain environmental conditions are met, it merely triggers and runs the program that selects the action. There is still no self-awareness exercising a choice of its own.
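The second criticism can be pictured with a small sketch of condition-triggered action selection. The environment fields, thresholds and action names below are hypothetical and are not taken from the CPS or ANN models mentioned above; the point is only that every "choice" is a rule fixed in advance by the designer.

```python
# Hypothetical condition-action rules written by the designer ahead of time.
# Each rule pairs an environmental condition with the action it triggers.
RULES = [
    (lambda env: env["obstacle_distance_m"] < 1.0, "stop"),
    (lambda env: env["battery_pct"] < 20, "return_to_charger"),
]
DEFAULT_ACTION = "continue"

def select_action(env):
    """Return the action of the first rule whose condition the environment
    satisfies; fall back to the default action when no rule fires."""
    for condition, action in RULES:
        if condition(env):
            return action
    return DEFAULT_ACTION

print(select_action({"obstacle_distance_m": 0.5, "battery_pct": 80}))
print(select_action({"obstacle_distance_m": 5.0, "battery_pct": 10}))
print(select_action({"obstacle_distance_m": 5.0, "battery_pct": 80}))
```

From the outside the system appears to respond to its surroundings, but the response is fully determined by the rule table and the rule order that the designer supplied.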

Artificial intelligence therefore has neither self-awareness nor self-cognition. All its actions are based on pre-written programs, and even deep learning does nothing more than simulate the existing human learning process. What marks "intelligence" as intelligence is that whoever deserves the name can make creative contributions in areas where predecessors have not fully set foot.

Conclusion

The purpose of a thing determines its structure. If the sole purpose of artificial intelligence adjudication were not to generate judgment results, artificial intelligence might not face the many criticisms the author raises in this paper. Artificial intelligence cannot carry human emotions, values and other humanistic resources, which means that it should occupy an auxiliary position in judicial activities. Human beings have an "intelligence" that artificial intelligence lacks. How to make good use of human intelligence to meet people's growing needs for a better life is the foundation for the long-term development of artificial intelligence.


Official website, Shanghai Law Society

http://www.sls.org.cn


Original title: "Zhu Haochuan and Chen Zhijia | On the Problem of Rational Application of Generative Artificial Intelligence Judgment"